Introduction

Every governance system produces decisions. Very few remember them.

Proposals in most organizations, whether they're blockchain protocols, parliaments, or community-driven collectives, are treated as standalone events. A proposal is drafted, debated, voted on, and then it disappears into an archive. The text might still be searchable, but the context surrounding it is gone. Who wrote it? What problem was it responding to? Did it reference or contradict an earlier decision? Was it the third attempt at solving the same issue? That information either scatters across platforms or lives in someone's head until they leave.

Most governance systems keep records. The failure is that records are not contextualized memories. A transcript tells you what was said. It does not tell you whether the same tension resurfaced two cycles later under a different name, or whether the person who brokered consensus held more influence than anyone with a formal title. Records capture activity. Memory captures context, outcomes, patterns, and power.

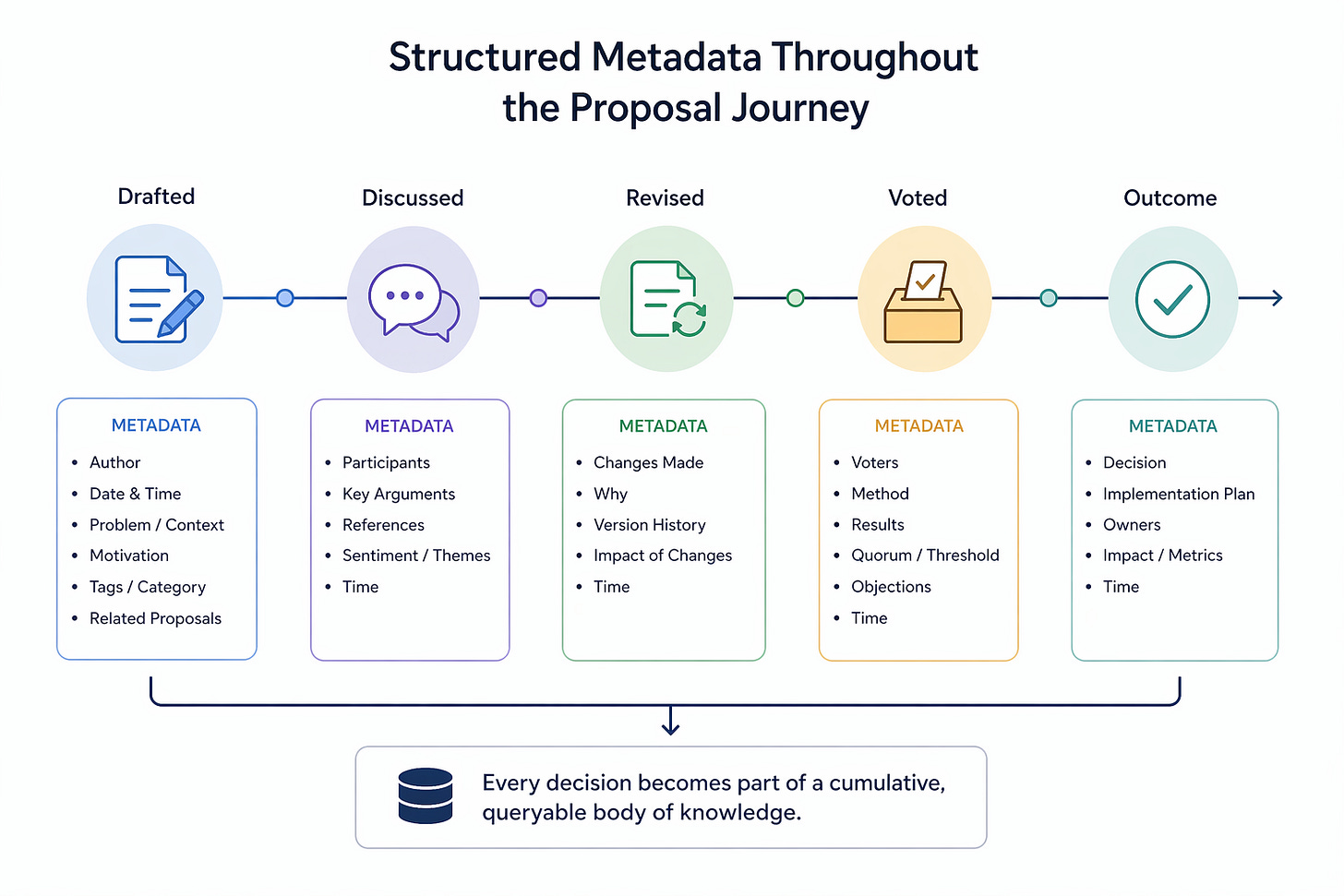

Proposal Lifecycle Metadata (PLM) is a structure for closing that gap. Metadata is data about data: the information that describes, categorizes, and contextualizes something. A photo on your phone carries metadata such as the date it was taken, the location, and the device that captured it, even though none of that appears in the image itself. PLM applies the same principle to governance decisions. It embeds structured metadata throughout a proposal's entire journey, from first draft to final outcome, so that every decision becomes part of a cumulative, queryable body of institutional knowledge rather than a one-off vote that fades from view. The Governance Memory System takes the PLM concept and implements it as a software component. The PLM framing guides which data the system draws on to reconstruct the most accurate context around the decision points it focuses on.

The Group Chat Problem

You already understand PLM intuitively. You've just never had to formalize it.

Think about a group chat with your friends. Someone throws out an idea for the weekend. “Let's go snowboarding.” That suggestion, informal as it is, carries metadata whether or not anyone writes it down. Who proposed it? When? What's the cost? How far is the drive? Does everyone have the gear? Is there someone in the group who has zero interest in snowboarding?

Now someone else counters: “What about a hike instead?” That proposal has its own characteristics. It's cheaper. It's more accessible. The one friend who just had knee surgery might struggle with it, but everyone else is in. A third person suggests a bar crawl, which solves the cost and logistics problem but doesn't work for the two people in the group who don't drink.

Every option affects the group members differently. Each proposal has constraints, trade-offs, and downstream consequences that touch different stakeholders in different ways. And the group leader, the person who actually coordinates the logistics, is doing informal PLM in their head: weighing who proposed what, what's worked before, what fell apart last time, and what's most likely to get alignment from enough people to actually make it happen.

Over time, the group builds rapport and shared memory. You stop spending thirty minutes debating what to do because you already know. Hiking works for almost everyone. That brewery with outdoor seating accommodates non-drinkers and dog owners. Saturday mornings are better than Sunday afternoons because two people in the group have standing commitments. Decision-making becomes faster and smoother because the group has accumulated enough history to know where alignment lies. You only burn energy on deliberation when someone wants to switch things up, or when a new person joins and shifts the group's preferences.

This is PLM operating in the background of everyday social life. The difference is that when you're coordinating a weekend with six friends, the stakes are low. If someone's preferences get overlooked or last month's failed plan gets repeated, the worst outcome is mild annoyance (or someone leaving the group chat if they're extra dramatic).

When There's No One Keeping Track

When the same dynamics play out inside institutions, the stakes change entirely. Now you're coordinating decisions that affect democracy, public funds, people's livelihoods, and their wellbeing. The informal memory that a friend group builds through shared experience has to be replaced with structured, persistent, queryable infrastructure, because at institutional scale you can't afford to rely on someone remembering how last time went.

The Jersey City Board of Education (JCBOE) is a case study in what happens when that infrastructure doesn't exist. In early 2026, the JCBOE approved a $1.1 billion budget that included a tax levy increase variously described as 2.01%, 15%, 17%, or 20%, depending on which official, which source, and which methodology you consulted. All four numbers were technically defensible. None of them lined up. Three other public school districts in the same county (Bayonne, North Bergen, and Hoboken) published clear tax hike figures alongside their budgets. Jersey City did not. Residents wanted to understand how their money was being spent and where previously collected money had gone, but the Board's public records made this nearly impossible. Property taxes are going up regardless, affecting renters, homeowners, and business owners, and the burden is made heavier by tax abatements that allow luxury buildings in the city to avoid paying property taxes. Residents have a right to understand how their dollars are allocated and how those decisions affect their children.

Of 484 unique spending commitments totaling $514.9 million approved over the prior two years, only 25 signed contracts were attached, covering just 15 of those commitments and $39.2 million in spending. That means 97% of contract approvals lacked the actual agreement, and 92% of the dollars approved were committed without a signed contract on the public record. The attached documents were mostly internal purchase order forms, not the contracts themselves. The district's independent auditors found the same documentation gaps in two consecutive fiscal years, with 10 findings repeating from one year to the next.
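The two percentages follow directly from the reported counts. A quick Python check, using only the figures stated above, shows they are rounded from 96.9% and 92.4%:

```python
# Figures as reported for the prior two years of JCBOE approvals.
total_commitments = 484
total_dollars_m = 514.9           # $ millions approved
commitments_with_contract = 15    # commitments covered by the 25 attached contracts
dollars_with_contract_m = 39.2    # $ millions covered by those contracts

missing_commitments = 1 - commitments_with_contract / total_commitments
missing_dollars = 1 - dollars_with_contract_m / total_dollars_m

print(f"Commitments without a signed contract: {missing_commitments:.1%}")  # 96.9%
print(f"Dollars without a signed contract: {missing_dollars:.1%}")          # 92.4%
```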

Think back to the group chat. Imagine the group planned a trip six months ago. Everyone chipped in, the group leader handled the logistics, and the trip happened. Since then, the group has done a few more outings, each time with the leader collecting money and coordinating. But nobody kept track of what was spent, which costs came in over budget, or whether that Airbnb deposit from the last trip ever got refunded. Now the group leader sends a message: “Next trip is going to cost everyone 17% more than last time because I covered certain excursions out of pocket.” The first question anyone would ask is: “Where did the money from the last few trips go?” That's the question Jersey City residents were asking. And the public records couldn't answer it.

A static version of PLM was built to investigate the situation: software that tracked every contract from proposal through approval, amendment, and payment, flagging when required documentation was missing at any stage. The results revealed patterns that would be invisible without structured metadata.

The most telling example was the “amendment chain gap.” When the Board awarded special education transportation routes in June 2024 through Resolution 10.18 (a $20.25 million umbrella contract spanning 17 vendors), a per-route spreadsheet listing every company, route, and rate was attached to that meeting. But every subsequent amendment to the contract, and there were many, referenced only the resolution number from the earlier meeting without re-attaching the vendor list. A resident reading a later amendment would see “additional funding for special education transportation routes” with no way to identify the vendors short of manually digging through a different meeting from months earlier. The same pattern appeared in the SY2025/26 umbrella (Resolution 10.16, $23.54 million for 21 vendors) and across maintenance and facilities services, construction projects, and software renewals throughout the dataset. Special education funding works somewhat differently, but broken amendment chains recur across many of the categories the JCBOE handles, making spending harder to understand and track from the outside.

In a PLM system, every amendment is linked to its parent contract. When you look at a later modification, the system automatically shows the original award, the vendors, the rates, and what specifically changed. No digging. No broken chains. None of these connections was visible in the Board's existing public records; PLM made them visible by structuring the metadata across decisions and surfacing the relationships between them. The underlying problem is that the district was running on shared memory that was neither documented nor easily retrievable, and documented, retrievable memory is critical for any institution that interacts with the public and owes it transparency.
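The parent link described above can be sketched in a few lines of Python. This is a minimal illustration, not the investigation's actual software: the `Resolution` record, its fields, and the amendment id `AMD-1` are hypothetical, and the vendor names are placeholders rather than the real 17 vendors. Each amendment stores only its parent's id; walking those links recovers the original award, and an amendment that omits the vendor list inherits it from the nearest ancestor that has one.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Resolution:
    """Hypothetical record for one Board action on a contract."""
    resolution_id: str
    meeting: str
    description: str
    parent_id: Optional[str] = None                   # None for an original award
    vendors: list[str] = field(default_factory=list)  # often empty on amendments


def amendment_chain(records: dict[str, Resolution], resolution_id: str) -> list[Resolution]:
    """Follow parent links back to the original award; return oldest first.

    Raises KeyError when a parent is referenced but missing (a broken chain).
    """
    chain: list[Resolution] = []
    seen: set[str] = set()
    current_id: Optional[str] = resolution_id
    while current_id is not None:
        if current_id in seen:
            raise ValueError(f"cycle in amendment chain at {current_id}")
        if current_id not in records:
            raise KeyError(f"broken chain: {current_id} is referenced but not on record")
        seen.add(current_id)
        record = records[current_id]
        chain.append(record)
        current_id = record.parent_id
    chain.reverse()
    return chain


def effective_vendors(chain: list[Resolution]) -> list[str]:
    """Use the most recent vendor list in the chain; amendments without one inherit it."""
    for record in reversed(chain):
        if record.vendors:
            return record.vendors
    return []


records = {
    "10.18": Resolution("10.18", "June 2024", "Special education transportation routes",
                        vendors=["Vendor A", "Vendor B"]),  # placeholder vendor names
    "AMD-1": Resolution("AMD-1", "later meeting", "Additional funding for routes",
                        parent_id="10.18"),                 # hypothetical amendment
}
chain = amendment_chain(records, "AMD-1")
print([r.resolution_id for r in chain])  # ['10.18', 'AMD-1']
print(effective_vendors(chain))          # ['Vendor A', 'Vendor B']
```

Raising on a missing parent, rather than silently stopping, is a deliberate choice: a dangling reference is exactly the kind of gap the investigation set out to flag.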

Context-Portable, Not Context-Specific

PLM is not tied to any single type of organization. The Jersey City investigation showed that the same structural logic that tracks a blockchain protocol proposal through Draft, Review, Last Call, and Voting can track a public contract through solicitation, Board approval, amendment, and implementation. The same metadata that flags a repeated blockchain proposal flags a repeated audit finding. In a parliamentary context, PLM tracks bills rather than proposals, with fields such as sponsor, co-sponsors, committee assignments, readings, and whether the bill amends existing legislation. The taxonomy changes. The principle remains the same: embed structured metadata throughout the decision's lifecycle so nothing disappears after the vote.

What PLM Actually Tracks

PLM brings a researcher's discipline to governance decisions: it provides a structured lens for understanding how any given decision fits into its larger context. The metadata attached to each proposal is what reveals how individual decisions, small pieces of the puzzle on their own, connect to the big picture of a system's institutional memory.

To make this concrete, here's what metadata on a single proposal might look like:

Proposal: Community Fund Allocation for Developer Grants Program

- Author: Core contributor, 3 prior proposals (2 passed, 1 withdrawn)

- Type: Financial / Treasury

- Status: Passed, partially implemented

- Created: March 2024, Revised: April 2024 (scope reduced after community feedback)

- Discussion venues: Governance forum (primary), Discord (informal debate), X (public commentary)

- Rationale: Developer retention declining; grant program designed to fund 10 independent teams over 6 months

- References: Builds on Proposal #47 (developer incentive framework), responds to concerns raised in Proposal #62 (treasury sustainability audit)

- Implementation status: 6 of 10 grants disbursed, program paused pending budget review

None of these fields are exotic. But without them captured in a structured way, this proposal becomes a single vote record in an archive. With them, it becomes a node in a web of decisions: connected to the proposals it built on, to the people who shaped it, to the discussion that refined it, and to the outcomes it did or didn't produce. That's the difference between a record and a memory.
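As one possible encoding, the example proposal above can be captured as a plain Python dataclass. The class name, field names, and enum values here are assumptions for illustration, not a published PLM schema, and `dev-grants` is a hypothetical id because the example gives the proposal no number.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PASSED = "passed"
    REJECTED = "rejected"
    WITHDRAWN = "withdrawn"


class Implementation(Enum):
    NOT_STARTED = "not started"
    PARTIAL = "partially implemented"
    FULL = "fully implemented"
    ABANDONED = "abandoned"


@dataclass
class ProposalMetadata:
    proposal_id: str
    title: str
    author_role: str
    proposal_type: str
    status: Status
    implementation: Implementation
    created: str                                          # "YYYY-MM"; the example gives months only
    revisions: list[str] = field(default_factory=list)
    venues: list[str] = field(default_factory=list)
    rationale: str = ""
    references: list[str] = field(default_factory=list)  # ids of earlier proposals


grants = ProposalMetadata(
    proposal_id="dev-grants",  # hypothetical id
    title="Community Fund Allocation for Developer Grants Program",
    author_role="Core contributor",
    proposal_type="Financial / Treasury",
    status=Status.PASSED,
    implementation=Implementation.PARTIAL,
    created="2024-03",
    revisions=["2024-04: scope reduced after community feedback"],
    venues=["Governance forum", "Discord", "X"],
    rationale="Developer retention declining; fund 10 independent teams over 6 months",
    references=["47", "62"],
)
print(grants.references)
```

The `references` field is what turns the record into a node: it holds the edges to Proposals #47 and #62. The author's track record is left out on purpose, since it can be derived from other proposals' records instead of being stored as free text.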

Now, scaling that across every proposal an organization produces:

Who proposed it, what is their role, and what is their track record with past proposals? Together these establish author credibility: enough context for voters and reviewers to assess the source.
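A track record like "3 prior proposals (2 passed, 1 withdrawn)" does not have to be typed in by hand; it can be computed from earlier records. A minimal sketch, assuming a hypothetical `PastProposal` record and an invented author name:

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class PastProposal:
    author: str
    status: str  # e.g. "passed", "rejected", "withdrawn"


def track_record(history: list[PastProposal], author: str) -> dict[str, int]:
    """Derive an author's record from prior proposals instead of storing it as free text."""
    mine = [p for p in history if p.author == author]
    return {"total": len(mine), **Counter(p.status for p in mine)}


history = [
    PastProposal("alice", "passed"),
    PastProposal("alice", "withdrawn"),
    PastProposal("bob", "rejected"),
    PastProposal("alice", "passed"),
]
print(track_record(history, "alice"))  # {'total': 3, 'passed': 2, 'withdrawn': 1}
```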

What domain does the proposal affect? PLM tags proposals by type (technical changes, financial decisions, meta-governance mechanisms, operations, and so on). The specific taxonomy adapts to the organization. A blockchain protocol might categorize by treasury, protocol development, and structure. A parliament might categorize by subject area, whether it amends existing law, and whether it's a constitutional matter. The point is consistent classification so that proposals can be grouped, filtered, and compared across time.

When was it created, revised, and enacted? Temporal data lets you see how long decisions take, whether certain types of proposals move faster than others, and whether revision patterns signal controversy or refinement.
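With creation and enactment dates on every record, "how long do decisions take" becomes a simple aggregation. The sketch below groups records by proposal type and reports the median number of days to enactment; the tuple layout is an assumption, and the records are illustrative rather than real proposals.

```python
from collections import defaultdict
from datetime import date
from statistics import median


def median_days_to_enact(records: list[tuple[str, date, date]]) -> dict[str, float]:
    """Group (type, created, enacted) records by type and return median days to enactment."""
    days_by_type: dict[str, list[int]] = defaultdict(list)
    for proposal_type, created, enacted in records:
        days_by_type[proposal_type].append((enacted - created).days)
    return {ptype: median(days) for ptype, days in days_by_type.items()}


# Illustrative records only; these are not real proposals.
history = [
    ("Technical", date(2024, 1, 1), date(2024, 1, 11)),  # 10 days
    ("Technical", date(2024, 2, 1), date(2024, 2, 21)),  # 20 days
    ("Financial", date(2024, 3, 1), date(2024, 4, 30)),  # 60 days
]
print(median_days_to_enact(history))  # {'Technical': 15.0, 'Financial': 60}
```

The median is used instead of the mean so that a single proposal stuck in review for a year does not distort the picture for its whole category.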

Where did the discussion happen? Governance conversations often span multiple platforms: formal forums, social media, group chats, and in-person meetings. PLM tracks discussion venues so that the deliberation record isn't fragmented across places no one thinks to check later.

Why was it proposed? The rationale, problem statement, and referenced issues tie the proposal to the conditions that gave rise to it. Without this, future participants have no way to evaluate whether the original reasoning still holds.

How did it evolve? Edit history, amendments, and voting outcomes capture the proposal's trajectory. A proposal that passed unanimously on the first try tells a very different story from one that was revised four times and passed narrowly.

PLM also tracks implementation status: fully implemented, partially implemented, or abandoned. This is a simple field, but most governance systems never capture it. A proposal can pass and never get built, and without status tracking, no one notices.
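Once the status field exists, noticing passed-but-unbuilt proposals is a one-line filter. In this sketch the `Outcome` record and its string values are assumptions. Note that a missing status is flagged alongside partial and abandoned work, because an unrecorded status is itself the gap this section describes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    proposal_id: str
    passed: bool
    implementation: Optional[str] = None  # "full", "partial", "abandoned", or None if never recorded


def passed_but_unfinished(outcomes: list[Outcome]) -> list[str]:
    """Return passed proposals that are not recorded as fully implemented."""
    return [o.proposal_id for o in outcomes if o.passed and o.implementation != "full"]


outcomes = [
    Outcome("A", passed=True, implementation="full"),
    Outcome("B", passed=True, implementation="partial"),
    Outcome("C", passed=True),  # passed, status never recorded
    Outcome("D", passed=False),
]
print(passed_but_unfinished(outcomes))  # ['B', 'C']
```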

Why This Matters

Without PLM, several problems compound over time. Proposals get repeated. The same idea gets raised, debated, and rejected, then raised again a year later by someone who wasn't around for the first round. The community burns time and attention re-discussing settled questions because there's no accessible record connecting the two attempts.

Accountability erodes. When there's no structured link between a proposer's track record and their current proposal, every submission is evaluated in a vacuum. Contributors who consistently propose initiatives that fail to deliver face no reputational consequence because the pattern is practically invisible.

Governance fatigue accelerates. When operational matters (routine maintenance, minor parameter changes) are mixed in with high-stakes governance topics, and there's no way to distinguish between them, voter attention gets diluted. Nobody wants to give feedback or make more decisions when they already have decision fatigue from their daily lives. Participation drops because people can't tell which decisions actually require their focus. PLM's categorization makes it possible to identify where attention is being spent versus where it's being wasted, and to justify shifting routine decisions to specialized working groups.

Precedent becomes invisible. Governance decisions don't exist in isolation. One proposal often sets the conditions, assumptions, or constraints for the next. PLM builds a dependency graph between proposals through explicit references and cross-citations, turning a flat archive into a navigable web of decisions. Without those links, you can't trace how one decision created the precedent for another, and you can't detect when a new proposal quietly contradicts something the community already agreed to.
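The dependency graph can be as simple as a mapping from each proposal to the proposals it references. The sketch below walks that mapping breadth-first to list every direct and indirect precedent, and reverses it to find which proposals depend on a given one. The ids are hypothetical (`30` in particular is invented). Detecting an actual contradiction would still require comparing the content of the linked decisions; the graph only tells you which decisions to compare.

```python
from collections import deque


def precedents(references: dict[str, list[str]], proposal_id: str) -> list[str]:
    """Every earlier proposal that proposal_id builds on, directly or indirectly (BFS order)."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(references.get(proposal_id, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        order.append(current)
        queue.extend(references.get(current, []))
    return order


def dependents(references: dict[str, list[str]], proposal_id: str) -> list[str]:
    """Proposals that cite proposal_id directly (the reverse edges)."""
    return sorted(p for p, refs in references.items() if proposal_id in refs)


# Hypothetical citation graph: each key lists the proposals it references.
references = {
    "dev-grants": ["47", "62"],
    "47": ["30"],
    "62": ["30"],
    "30": [],
}
print(precedents(references, "dev-grants"))  # ['47', '62', '30']
print(dependents(references, "30"))          # ['47', '62']
```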

Procedural safeguards become unenforceable. Some governance systems have rules like “a proposal identical to a previously rejected one cannot go to vote for three months.” Without structured metadata linking proposals to one another, a rule like that is aspirational at best. PLM is the infrastructure that makes procedural safeguards actually enforceable.
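As an illustration of how such a rule becomes checkable, here is a minimal sketch under two loud assumptions: "identical" means the same text after lowercasing and collapsing whitespace, and "three months" is approximated as 90 days. The function names and the sample proposal text are hypothetical.

```python
import hashlib
from datetime import date, timedelta

# Assumption: "three months" is approximated as 90 days.
COOLDOWN = timedelta(days=90)


def fingerprint(text: str) -> str:
    """Hash of the proposal text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def can_go_to_vote(text: str, vote_date: date, rejections: dict[str, date]) -> bool:
    """rejections maps a fingerprint to the date an identical proposal was last rejected."""
    rejected_on = rejections.get(fingerprint(text))
    return rejected_on is None or vote_date >= rejected_on + COOLDOWN


rejections = {fingerprint("Raise the grant cap to 50k"): date(2024, 5, 1)}
print(can_go_to_vote("raise the  grant cap to 50K", date(2024, 6, 1), rejections))  # False
print(can_go_to_vote("raise the  grant cap to 50K", date(2024, 8, 1), rejections))  # True
```

Exact fingerprints only catch verbatim resubmissions. Catching a reworded version of the same idea would need some form of similarity matching, which is a separate design decision.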

PLM Also Detects Its Own Obsolescence

PLM is also designed to flag its own obsolescence. Governance structures change over time: templates are updated, new roles are created, and discussions move to new platforms. Categories that made sense two years ago may no longer reflect how the organization actually works. PLM can't diagnose that drift by itself, though. It needs a layer that checks whether the system's assumptions still match reality by reviewing what actually happened after decisions were made. That layer is Outcome Review Anchors, the next in the series and the subject of the next essay.

The core insight behind PLM is that governance is not a series of isolated choices. It is a body of knowledge that either accumulates or gets lost. You already do this naturally with the people closest to you. PLM is what makes it possible at the scale where forgetting has serious consequences.

Sources

- Jersey City BOE Narrowly Approves $1.1B Budget; Officials Mostly Mum on Tax Hike — Hudson County View

- GMS Diagnostic: Jersey City Board of Education — OCC Research

Governance that remembers. Institutional Memory as a Service.

Have thoughts or feedback on this research?

Othman@occresearch.org